There is no AI Firing Boom

AI is not putting people out of jobs. But it is a convenient wand to wave when you’ve been leading your organization poorly and have accumulated unnecessary headcount. As Atlassian and Block did. It has even gotten its own word: “AI-washing.” Klarna meant it: they really did fire customer support staff and replace them with AI. Their customer satisfaction plummeted, and they wised up and re-employed humans. That is known as “The Klarna Effect.”

The Economist has analyzed AI-driven productivity gains. 41% of U.S. workers now use AI, usage is about 5.7% of work hours, and the productivity gain is about 15% on realistic tasks. That points to a productivity gain of about 0.4% across the enterprise. The real-life benefit is smaller. Some studies suggest that when people have used an AI to solve a problem, they celebrate with an extra-long coffee break, totally negating the benefit.
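One way to arrive at roughly that figure is to multiply the three numbers together. This is a back-of-the-envelope sketch, and it assumes the 5.7% of work hours applies to the workers who use AI and that the factors are independent; the variable names are mine, not The Economist's.

```python
# Back-of-the-envelope estimate from the three figures quoted above.
# Assumption: the factors are independent and simply multiply.
share_of_workers_using_ai = 0.41   # 41% of U.S. workers
share_of_hours_with_ai = 0.057     # about 5.7% of work hours
gain_on_ai_assisted_tasks = 0.15   # about 15% on realistic tasks

overall_gain = (
    share_of_workers_using_ai
    * share_of_hours_with_ai
    * gain_on_ai_assisted_tasks
)

print(f"Estimated overall productivity gain: {overall_gain:.1%}")
# prints "Estimated overall productivity gain: 0.4%"
```

Plug in your own organization's numbers for the three inputs before you walk into the CEO's office.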

So when the CEO asks you as an IT leader how much you can reduce headcount with AI, the true answer is 0%. The answer you need to give to keep your job will be higher.

AI Gets Adult Supervision

Amazon is implementing adult supervision for their AI. About time.

AWS has suffered a number of embarrassing outages lately. The common theme has been AI-assisted changes made by junior developers. To nobody’s surprise, it turns out that inexperienced humans are not good at evaluating the sometimes convoluted solutions suggested by the machine.

The solution AWS is implementing is to require sign-off by more senior developers.

If you are running serious systems and care about uptime, vibe coding without an experienced human in the loop is not the solution.

The Rest of the World is Different

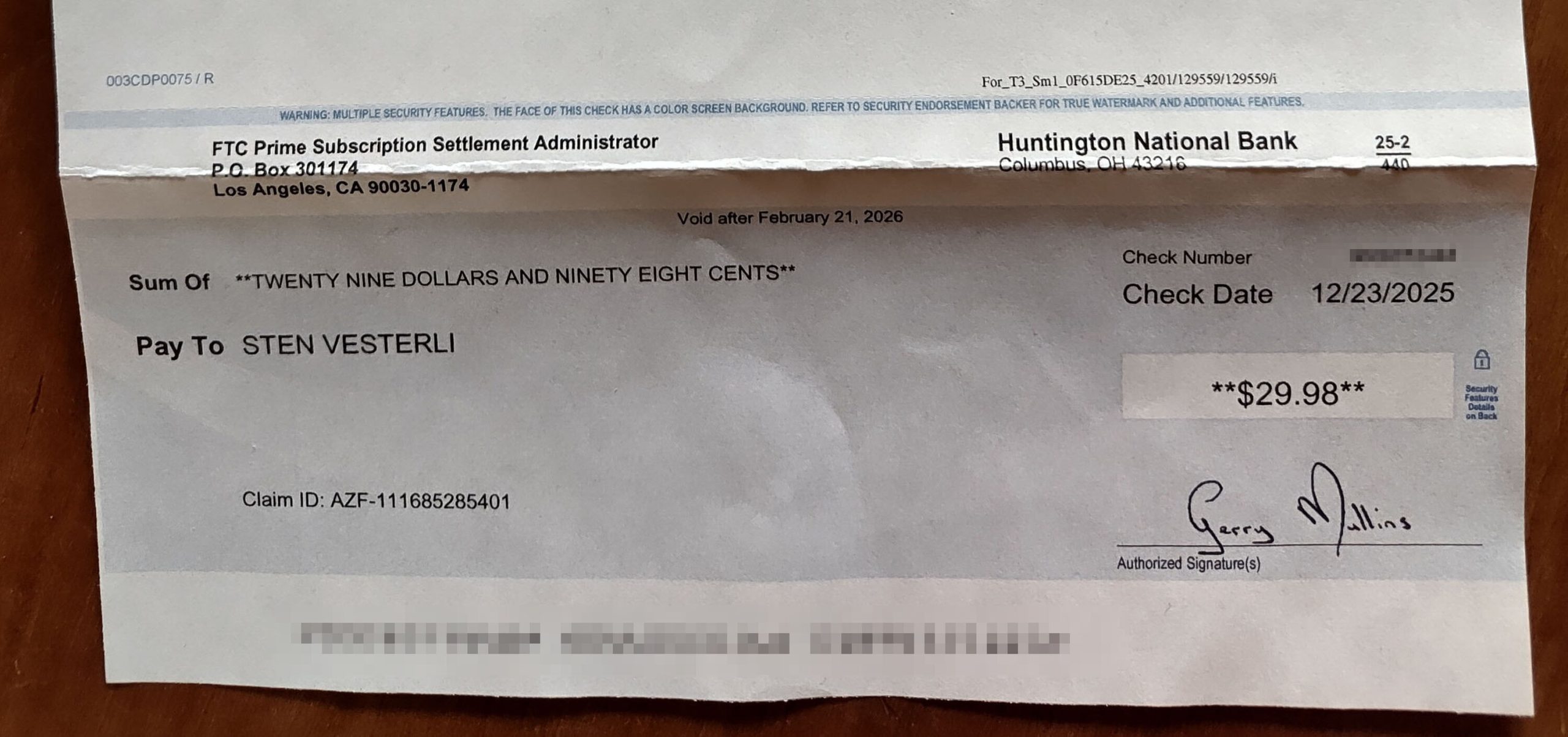

I just received my settlement cheque from the FTC. Apparently, as an ex-Amazon Prime member, I have been injured by their deceptive practices and am entitled to 29 dollars and 98 cents. And I receive a paper cheque. No bank in Denmark will cash that – cheques were discontinued in Denmark a decade ago. To get my $29.98, I would have to travel to the U.S., which for obvious reasons won’t happen.

We assume that the rest of the world operates like we do. It doesn’t. Germany still has fax machines. I don’t know how things are done in South Korea or China, but it is a safe bet that I cannot imagine it.

If you want to address the world, you need someone who knows each part. Distance is not dead.

Overconfidence

40 years ago, when I started dating my wife, I was the confident one. She was the one with the better sense of place and direction. We did get lost quite a few times before she realized I was over-confident in my own abilities, and I learned that her wayfinding skills were superior to mine.

It is a common human trait to defer to (possibly misplaced) confidence. IT already suffers from this, as anyone subjected to the latest fad in IT development or architecture can attest.

But with AI, this problem is magnified a hundredfold. Total nonsense and sharp analysis are both presented with utter confidence. For any important decision, take some time to think it through yourself. Or at least ask another AI to criticize the decision of the first one.

Spreadsheet Optimizing

The train drivers here in Denmark are getting sicker and sicker, and it’s the computer’s fault. Specifically, it is the fault of the new fancy train driver schedule optimization software that our blundering national railway company has ineptly implemented.

It optimizes away people’s breaks, violates union-agreed rules, and fragments a train driver’s working day into running four different trains in a day instead of one or two.

It is a classic case of Spreadsheet Optimizing. Someone who doesn’t know the real world or care about the people doing the work will twiddle with a spreadsheet or other software to gain a theoretical 1-2% benefit, but will realize a 10-20% loss.

In theory, there is no difference between theory and practice. In practice, the difference is huge.

Do You Test Your AI?

How do you test the AI you use? All the major providers are constantly leapfrogging each other, and if you don’t investigate their improving capabilities, you are at risk of missing out.

My personal test suite for LLM chatbots includes playing chess against them. Two years ago, they could manage 5-7 moves before they made an illegal or stupid move. The best LLMs are now up to 20-25 moves.

In a professional coding setting, you should create a test suite of relevant coding tasks on your codebase. For example, give each AI the same block of code and ask for a security and performance review. You’ll find a big difference between engines, and all of them are rapidly improving.
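Such a suite does not have to be elaborate. Here is a minimal sketch of the idea, under loud assumptions: `ReviewTask`, `score_answer`, and `run_suite` are hypothetical names, the two engines are stand-in functions rather than real AI tools, and the keyword matching is a deliberately crude scoring method that a human reviewer should back up.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ReviewTask:
    name: str
    prompt: str                    # the code plus the review request
    expected_keywords: List[str]   # findings a good review should mention


def score_answer(answer: str, task: ReviewTask) -> float:
    """Fraction of expected findings mentioned in the answer (crude keyword match)."""
    if not task.expected_keywords:
        return 0.0
    text = answer.lower()
    hits = sum(1 for kw in task.expected_keywords if kw.lower() in text)
    return hits / len(task.expected_keywords)


def run_suite(
    engines: Dict[str, Callable[[str], str]], tasks: List[ReviewTask]
) -> Dict[str, float]:
    """Average score per engine across all tasks."""
    results = {}
    for engine_name, ask in engines.items():
        task_scores = [score_answer(ask(task.prompt), task) for task in tasks]
        results[engine_name] = sum(task_scores) / len(task_scores) if task_scores else 0.0
    return results


# Stand-in engines (assumption): replace with calls to the tools you evaluate.
def engine_a(prompt: str) -> str:
    return "Possible SQL injection; the query inside the loop is an N+1 performance problem."


def engine_b(prompt: str) -> str:
    return "Looks fine to me."


tasks = [
    ReviewTask(
        name="orders-report",
        prompt="Review this code for security and performance issues:\n<your code here>",
        expected_keywords=["sql injection", "n+1"],
    )
]

scores = run_suite({"engine_a": engine_a, "engine_b": engine_b}, tasks)
print(scores)
```

Keep the tasks fixed and rerun the suite every time a provider ships a new model, so you compare like with like.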

Have someone on your team test the current crop of AI tools regularly. Rotate the task between team members – they will each test different aspects.

Forcing AI

Tech companies like Amazon, Meta, and Google have begun forcing AI tools down the throats of unwilling engineers. Managers are presented with dashboards showing how their underlings use AI. In some places, insufficient enthusiasm for AI tools will count against you in your next performance review.

That is an amazingly stupid idea. You should reward the outcome, not the way it was achieved. It reminds me of other poorly led organizations that reward those who put in many hours over those who get the same job done faster.

If your AI tools are so great, people will use them of their own volition. Forcing them on people demonstrates that they don’t work as well as advertised.

A Place Where AI Can Help

IBM stock dropped 13% on the news that Claude Code can now refactor COBOL. That might be bad for IBM, but it is good for the wider IT world.

Using an LLM inductively—writing a lot of code from a short prompt—allows it to confabulate over-complex and buggy code. But using an LLM deductively—distilling knowledge from a larger data set—is a place where the AI can do something well that humans are not very good at.

Since the beginning of programming, we have struggled with documentation. Everybody who has been in the industry for a while has experienced being thrown into a swamp of inconsistent, badly documented code with the instruction to fix it.

I’m not afraid that AI will put us all out of a job. We have a gazillion lines of legacy code that need refactoring. That has been prohibitively expensive until now. With modern AI tools, we have a chance to make a dent in the problem.

Who Gets the Blame?

We’re starting to see AI bots doing damage, even in professional organizations. Those of us who work professionally with IT have watched with horrified fascination as gullible end users entrust their Openclaw/Clawdbot with their credentials and credit cards, but those are personal experiments. Now the problem is spreading.

At AWS, the AI bots inherit the authorization of the user running them. That means that if a user is entrusted with the power to delete an environment, the AI bot can also do so. As it did. The outage seems to have been limited to one service in one region. Interestingly, AWS blames the error on the human engineer who let the bot loose, not on the bot. It reminds me of how Boeing and Airbus always try to blame all crashes on “Pilot Error.”

Share this story at your next team meeting and discuss if it could happen in your organization. And think about who would get the blame…

Excessive Quality

Excessive quality is “muda” – waste. If you’ve been exposed to Lean, you know the seven types of waste. One of the common ones in IT is overprocessing. Building high-quality software for a temporary need is overprocessing.

Much of the discussion about crappy AI-generated code fails to distinguish between critical systems that lives depend on and that will be maintained for decades, and one-off temporary solutions.

Spending scarce human programmer resources on building tactical, short-lived systems is overprocessing. Let the AI build it.