The fear of every air traffic controller is “losing the picture.” That is the situation where your mental model of where everyone is and where they are going starts to break down. Regaining the picture is very hard, so the controller will ask for help. Usually, the supervisor will split the sector and assign a relief controller to one half of it.

I have often seen IT organizations that have “lost the picture,” but there is never a relief controller available to step in. They simply continue struggling to keep the system running while the bug reports and enhancement requests pile up.

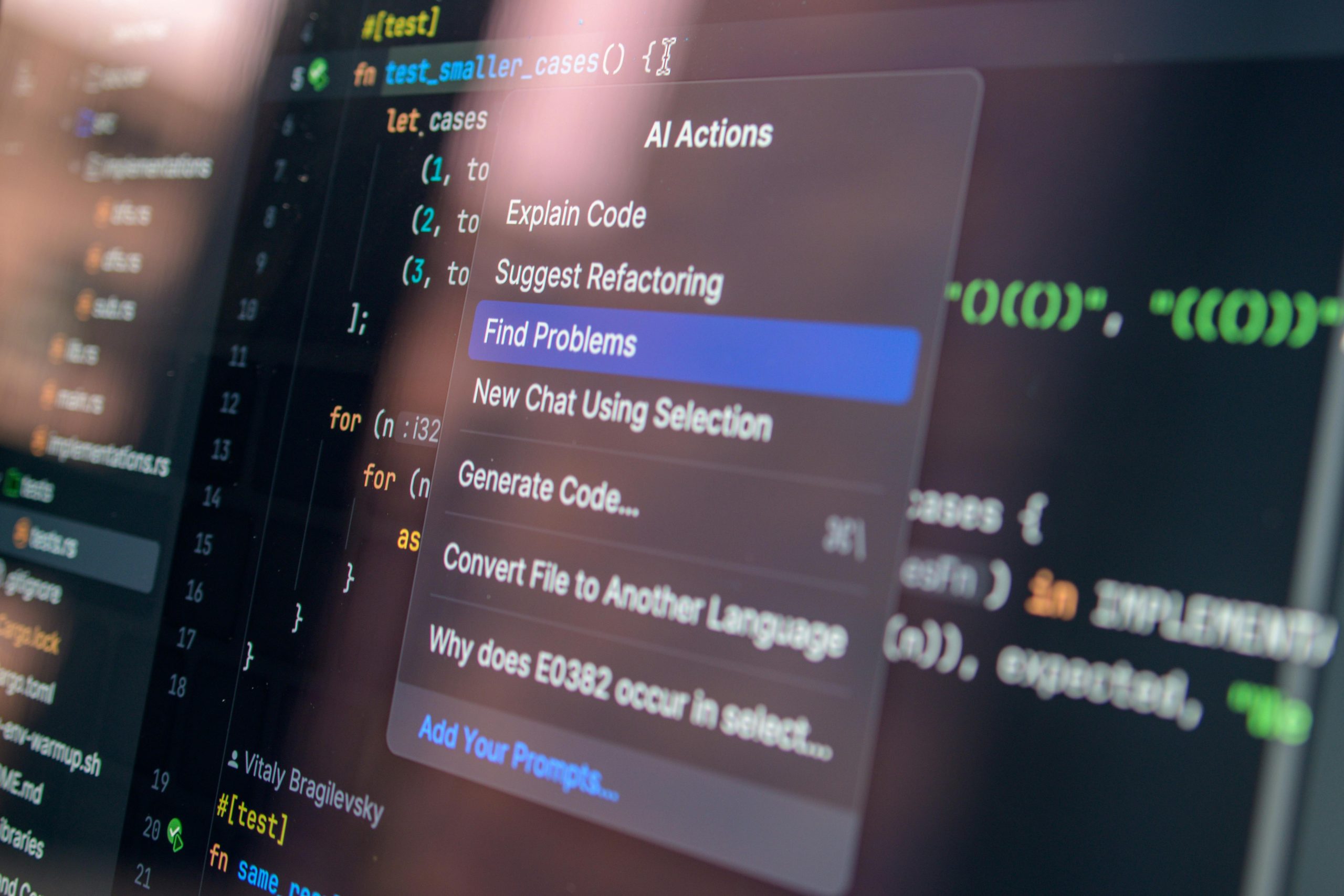

The number one reason a team lost the picture used to be that complexity grew until it reached the limit of what the team could handle, and then the most experienced person left. That is hard enough to handle. Today, some teams happily let AI tools build code they don’t understand, right from the beginning. They are rapidly losing the picture, as the emerging horror stories about teams drowning in AI code clearly show.